Our Software Development Process: Testing and Quality Assurance

January 29, 2016

This blog post is part of our series on Augment’s software development process. This post covers the super exciting topic of testing and quality assurance. Check out our other posts: The Ideation Phase, Gathering Basic Requirements for a Proposal, The Proposal, Gathering Requirements, and Creating a Backlog and Planning Sprints. People new to the software world often do not appreciate the importance of testing. It is a huge part of any project. There are always bugs to be found and workflows to improve. We try to prevent as many bugs as possible, but some are inevitable.

So how do we test software? There are two main ways: manual and automated. Manual testing is appropriate for new product development. Once a company has a more stable version of its software product (a website or mobile app, for example), it can make sense to write automated tests. For those we generally use Selenium or Geb, both of which are browser automation frameworks.

Let’s first talk about manual testing, which is what we use for new product development. What is manual testing? It is when a person sets up and executes a test plan to look for bugs. For example, if I enter DER@.com into an email form, the form should not be submitted and an error message should appear.

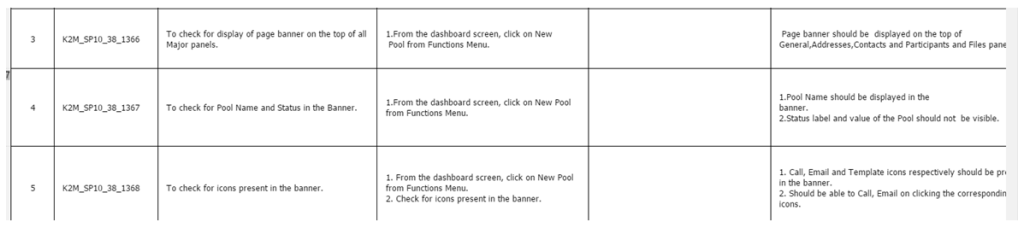
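To make the email example concrete, here is a minimal Python sketch of how that test case could be written down as input and expected-result pairs. The validation rule (`EMAIL_PATTERN`) and the extra addresses are assumptions for illustration, not the rule any particular form uses.

```python
import re

# Assumed rule for illustration only: something before the "@", then at least
# two dot-separated domain labels, none of them empty.
EMAIL_PATTERN = re.compile(r"[^@\s]+@[^@\s.]+(\.[^@\s.]+)+")


def is_valid_email(address: str) -> bool:
    """Return True when the whole string matches the assumed email pattern."""
    return EMAIL_PATTERN.fullmatch(address) is not None


# Each test case pairs an input with the result the tester expects.
TEST_CASES = [
    ("DER@.com", False),        # the example from this post: nothing between "@" and ".com"
    ("der@example.com", True),  # a well-formed address (hypothetical)
    ("der@example", False),     # missing top-level domain (hypothetical)
    ("", False),                # empty field
]

for address, expected in TEST_CASES:
    actual = is_valid_email(address)
    status = "PASS" if actual == expected else "FAIL"
    print(f"{status}: {address!r} accepted={actual} expected={expected}")
```

A manual tester does the same thing by hand: type each input, compare what the form does with the expected result, and record pass or fail.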

How do we put together a manual testing plan? Generally our QA analysts (testers) take the requirements document (which we discussed in more detail in a previous post), or even something as simple as wireframes, and write test cases.

We usually use Excel to write down and track all the manual test cases. In a typical 2-week sprint there could be 60-100 test cases written, tested and tracked.

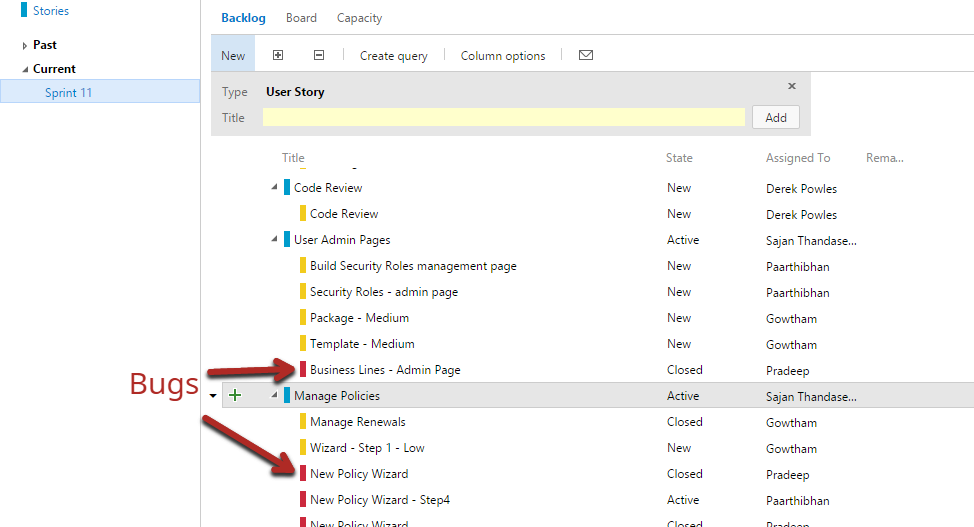

What happens when a bug is found? The bug is logged in our project management software, often VSO or Jira. It is then tracked and added to the following sprint to be fixed. Once fixed, it is retested. Once the retest passes, the bug is marked completed in VSO or Jira.

Automated testing is a different process. We still have to write up a detailed test plan. But instead of having a human run through each step manually (does the email form accept only the proper format?), a script (code) is written so that a software robot runs through every step.

It is actually cool to watch because the script takes over the browser and starts going through the different steps, typing in text and navigating to different pages. It looks like someone took over your browser, and that is because someone did: the software robot running the script.

To write these scripts we rely on browser automation frameworks like Selenium and Geb. These frameworks have tools that make it easier to command the software robot to perform certain actions in the browser (like clicking on a product link). And this can be done across many different browsers (Chrome, Safari, IE/Edge, Firefox).
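As a rough idea of what such a script looks like, here is a minimal sketch of the email form check using Selenium's Python bindings. The URL and the CSS selectors are hypothetical placeholders, and a real script would usually also wait for the error message to appear rather than checking immediately.

```python
# Hypothetical values: replace with the real page URL and element locators.
FORM_URL = "https://example.com/signup"
# ("css selector", ...) is the same locator strategy string as By.CSS_SELECTOR.
EMAIL_INPUT = ("css selector", "input[name='email']")
SUBMIT_BUTTON = ("css selector", "button[type='submit']")
ERROR_MESSAGE = ("css selector", ".error-message")


def invalid_email_is_rejected(driver, email="DER@.com"):
    """Type an invalid address, submit, and report whether an error is displayed."""
    driver.get(FORM_URL)                                 # open the page
    driver.find_element(*EMAIL_INPUT).send_keys(email)   # type into the email field
    driver.find_element(*SUBMIT_BUTTON).click()          # press submit
    errors = driver.find_elements(*ERROR_MESSAGE)        # empty list if none found
    return any(error.is_displayed() for error in errors)


def run_in_chrome():
    """Run the check in a real Chrome window (needs `pip install selenium` and Chrome)."""
    from selenium import webdriver  # imported here so the check itself has no hard dependency

    driver = webdriver.Chrome()
    try:
        return invalid_email_is_rejected(driver)
    finally:
        driver.quit()  # always close the browser, even if the check raises
```

Calling `run_in_chrome()` opens a browser window and performs the steps on its own. Swapping `webdriver.Chrome()` for `webdriver.Firefox()` runs the same check in another browser.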

At what point does it make sense to move from manual to automated testing? Generally, if you are going to run the same test over and over again, it makes sense to automate it. You could add up the number of hours spent doing the manual test each year and compare that to the cost of developing an automated test. If you are testing weekly over several years, it probably makes sense to automate. Even monthly testing could be enough to justify automation.
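That comparison can be sketched as a simple break-even calculation. The hour figures below are made-up numbers for illustration only, and the function name and parameters are our own.

```python
import math


def break_even_runs(manual_hours_per_run, automation_build_hours,
                    automated_hours_per_run=0.0):
    """Return the number of test runs at which automation stops costing more
    than manual testing, or None if automation never catches up."""
    saved_per_run = manual_hours_per_run - automated_hours_per_run
    if saved_per_run <= 0:
        return None  # each automated run costs as much as (or more than) a manual one
    return math.ceil(automation_build_hours / saved_per_run)


# Made-up example: a 2-hour manual test, 40 hours to write the script,
# and 15 minutes of upkeep per automated run.
runs = break_even_runs(manual_hours_per_run=2,
                       automation_build_hours=40,
                       automated_hours_per_run=0.25)
print(f"Break-even after {runs} runs")
print(f"Testing weekly:  about {runs / 52:.1f} years")
print(f"Testing monthly: about {runs / 12:.1f} years")
```

With these assumed numbers, weekly testing pays back the script in under half a year, while monthly testing takes closer to two years.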

After we test the software developed in a sprint, we move that code over to the user testing server. There the user/client goes through their own testing and provides feedback on any issues or bugs they find. These bugs are added to the backlog and fixed in the following sprint.
